During the summer of 2014, I worked at the Biorobotics Lab in the Robotics Institute at Carnegie Mellon University, under the guidance of Prof. Howie Choset. I worked on two projects while I was there:

- Interior Wing Assembly Mobile Platform (IWAMP).

- Pole Climbing for Snake Robots.

Below is a video demo of the localization system on the IWAMP:

Interior Wing Assembly Mobile Platform


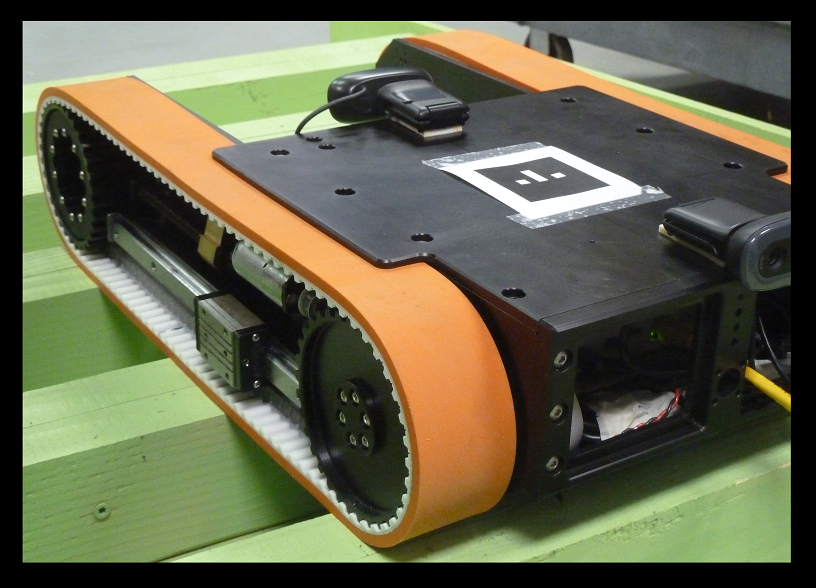

My work at the Biorobotics Lab under Prof. Howie Choset was on the Boeing Crawler Robot, also called the Interior Wing Assembly Robot (IWAR). I worked with Lu Li and Aditya Gupta on software development and on the vision-based localization system of the Crawler. I am continuing the project as a remote collaboration.

The work done so far can be classified as follows.

Vision System

The vision system of the Crawler consists of a front and a rear camera. For Phase-1, the rear camera was used for the Marker Tag tracking system and robot pose estimation, and the front camera was used for the CAD-based localization system, specifically to perform edge detection and retrieve the positions of the edges. All nodes are parametrized for ease of configuration and testing.

Software and Process Model Development

The Crawler runs the Robot Operating System (ROS). This open architecture permits modularity and a behavioral approach to software development. It also facilitates parallel multi-machine processing and a high level of abstraction of Crawler tasks. Packages were developed according to the required behaviors.

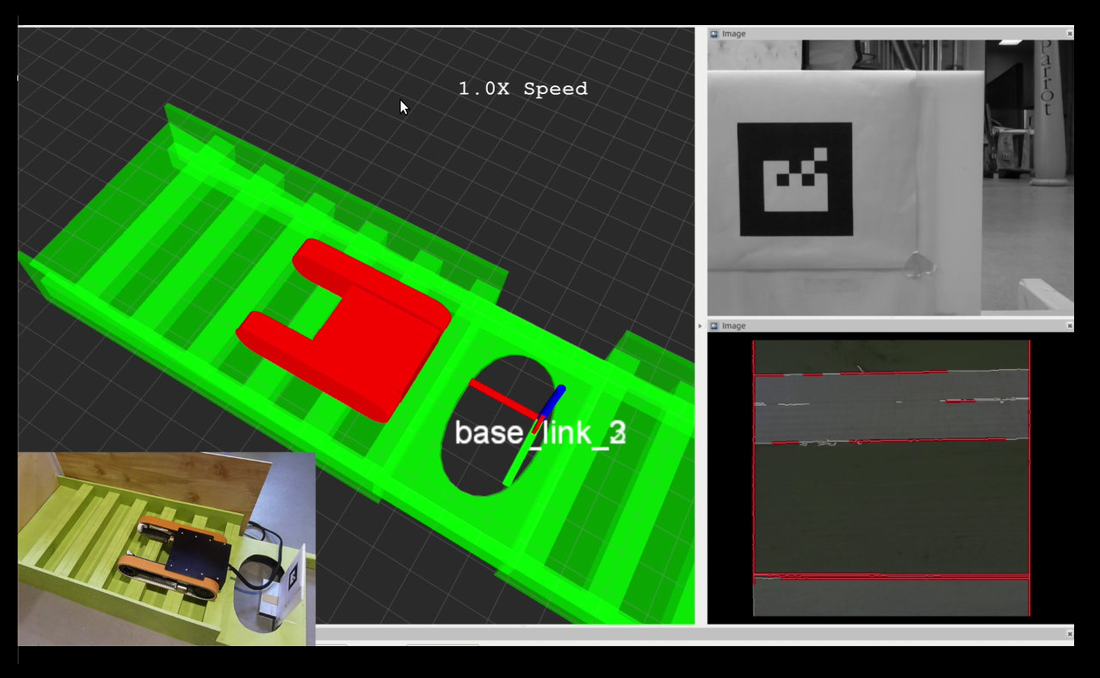

The IWAR in the wing environment.

- On the bottom left, the real prototype and environment.

- In the middle, the real time visualization of the robot.

- On the right top, the robot's rear camera feed.

- On the right bottom, the robot's front camera feed of the environment.


Marker Based Position Estimation

Augmented Reality (AR) tags were placed at known positions within the wing bay. The rear camera detects a tag using existing detection algorithms and extracts its position and orientation. This system does not require prior knowledge of the robot pose. The extracted transform is inverted into the Crawler-Camera frame, and a fixed response filter smooths the pose estimate and suppresses noise.
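The inversion and smoothing steps can be illustrated with a minimal sketch. It assumes poses are stored as 4x4 homogeneous transforms, and it uses a plain moving average over position as a stand-in for the filter, since the filter actually used on the Crawler is not detailed here. The names `invert_transform`, `camera_pose_in_world`, and `MovingAverageFilter` are hypothetical and not taken from the project code.

```python
import numpy as np


def invert_transform(T):
    """Invert a 4x4 rigid-body transform using the rotation transpose."""
    R = T[:3, :3]
    t = T[:3, 3]
    T_inv = np.eye(4)
    T_inv[:3, :3] = R.T
    T_inv[:3, 3] = -R.T @ t
    return T_inv


def camera_pose_in_world(T_world_tag, T_cam_tag):
    """Combine the known tag pose with the inverted detection.

    T_world_tag: pose of the tag in the wing-bay frame (known in advance).
    T_cam_tag:   pose of the tag as seen from the camera (from the detector).
    """
    return T_world_tag @ invert_transform(T_cam_tag)


class MovingAverageFilter:
    """Assumed smoothing filter: mean of the last `window` position samples."""

    def __init__(self, window=5):
        self.window = window
        self.samples = []

    def update(self, position):
        self.samples.append(np.asarray(position, dtype=float))
        self.samples = self.samples[-self.window:]  # keep only recent samples
        return np.mean(self.samples, axis=0)


# Example: a tag at (2, 0, 1) in the bay, seen 1.5 m straight ahead of the camera.
T_world_tag = np.eye(4)
T_world_tag[:3, 3] = [2.0, 0.0, 1.0]
T_cam_tag = np.eye(4)
T_cam_tag[:3, 3] = [0.0, 0.0, 1.5]
T_world_cam = camera_pose_in_world(T_world_tag, T_cam_tag)
```

Only the position is averaged in this sketch; orientation would need separate handling, for example averaging quaternions, because rotation matrices cannot be averaged element-wise.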

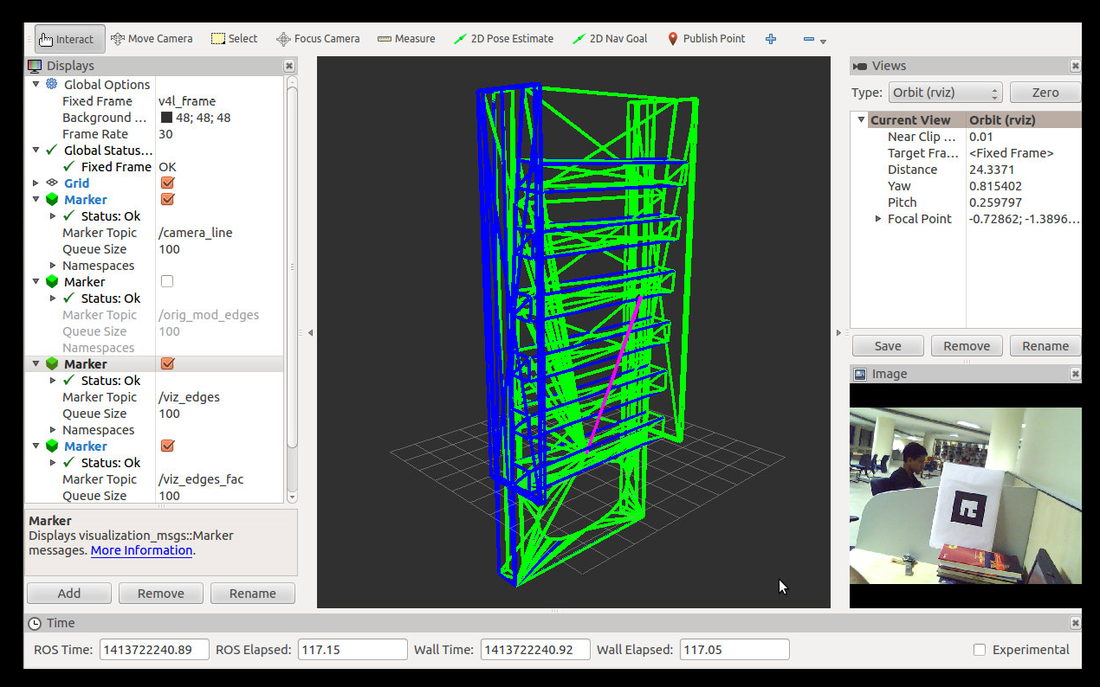

Robot-Environment Visualization

An accurate visualization system is necessary to monitor the progress of the Crawler. The wing bay CAD model is first loaded into the visualization environment. After axis-remapping and calibration, the CAD model of the Crawler is projected into the environment in accordance with the current pose estimate of the robot. The detected edges from the CAD model are also visualized for an intuitive representation of the localization.

3D CAD based Localization

During Phase-2, a novel localization system based on a monocular camera and a 3D CAD model was envisioned for the Crawler. Once the CAD file has been imported into a software-compatible format, initial pre-processing is done. Edge positions are then obtained through two approaches:

- Offline Image Tree approach - A projection image tree is generated offline from all possible camera locations. Edge positions are then retrieved from these images.

- Online Virtual Camera approach - Based on previous camera pose estimates, 'virtual cameras' are created in the CAD model frame. A custom ray tracing algorithm is used to determine the visible edges (and their positions) for these virtual cameras.
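The virtual camera idea can be sketched as follows, under simplifying assumptions: CAD edges are 3D line segments in the model frame, the virtual camera is an ideal pinhole looking along its +z axis, and an edge counts as visible when both endpoints project inside the image. This is not the custom ray tracing algorithm mentioned above, and it does not handle occlusion. The function name `project_edges` and the intrinsics `fx`, `fy`, `cx`, `cy` are illustrative.

```python
import numpy as np


def project_edges(edges_world, T_world_cam, fx, fy, cx, cy, width, height):
    """Project 3D line segments into a virtual pinhole camera.

    Returns (edge_index, pixel_a, pixel_b) for every segment whose two
    endpoints lie in front of the camera and inside the image bounds.
    Occlusion by other geometry is NOT checked in this sketch.
    """
    T_cam_world = np.linalg.inv(T_world_cam)  # model frame -> camera frame
    visible = []
    for i, (a, b) in enumerate(edges_world):
        pixels = []
        for p in (a, b):
            pc = T_cam_world @ np.append(np.asarray(p, dtype=float), 1.0)
            if pc[2] <= 0:  # endpoint is behind the camera
                break
            u = fx * pc[0] / pc[2] + cx
            v = fy * pc[1] / pc[2] + cy
            if not (0 <= u < width and 0 <= v < height):
                break  # endpoint falls outside the image
            pixels.append((u, v))
        if len(pixels) == 2:
            visible.append((i, pixels[0], pixels[1]))
    return visible


# Example: one edge in front of a camera placed at the model origin, one behind it.
edges = [
    ((-1.0, 0.0, 2.0), (1.0, 0.0, 2.0)),
    ((0.0, 0.0, -1.0), (1.0, 0.0, -1.0)),
]
seen = project_edges(edges, np.eye(4), fx=100.0, fy=100.0, cx=320.0, cy=240.0,
                     width=640, height=480)
```

In the offline variant, the same projection could be evaluated ahead of time for a grid of candidate camera poses and the results stored for lookup.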

The visualized CAD model of the environment.

- Green lines indicate the original model.

- Blue lines indicate lines visible to the robot from the current position.

- The purple line indicates the position of the robot relative to the origin.

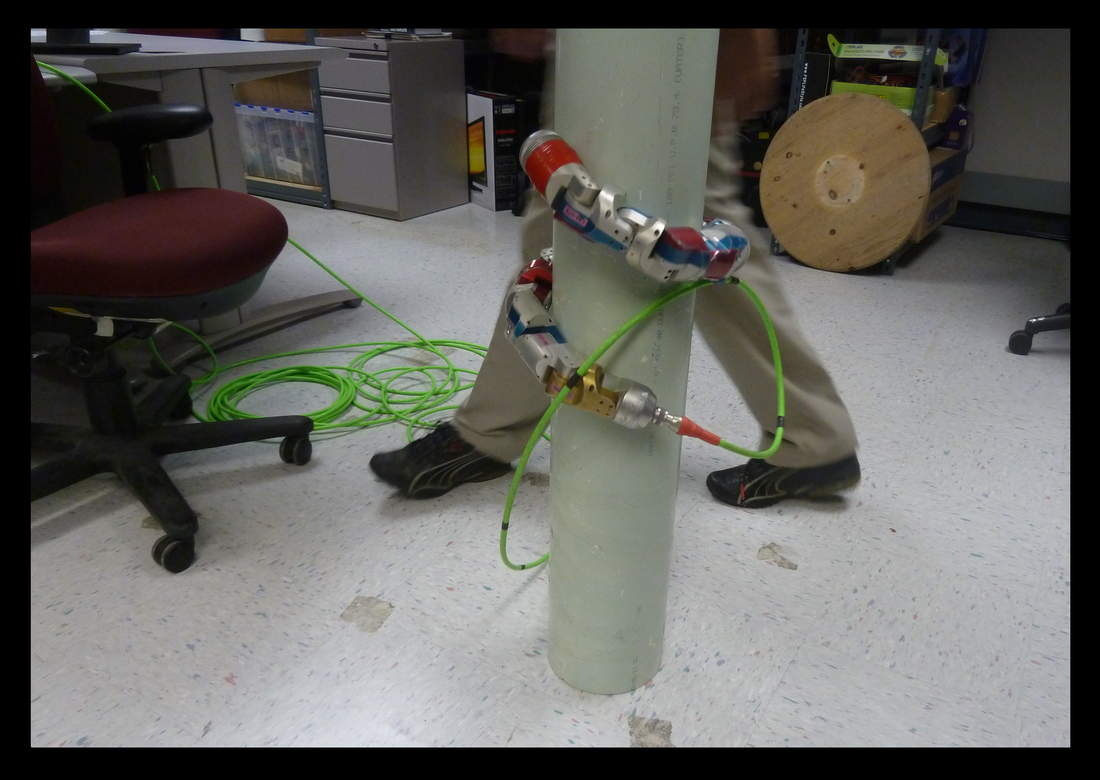

Pole Climbing for Snake Robots

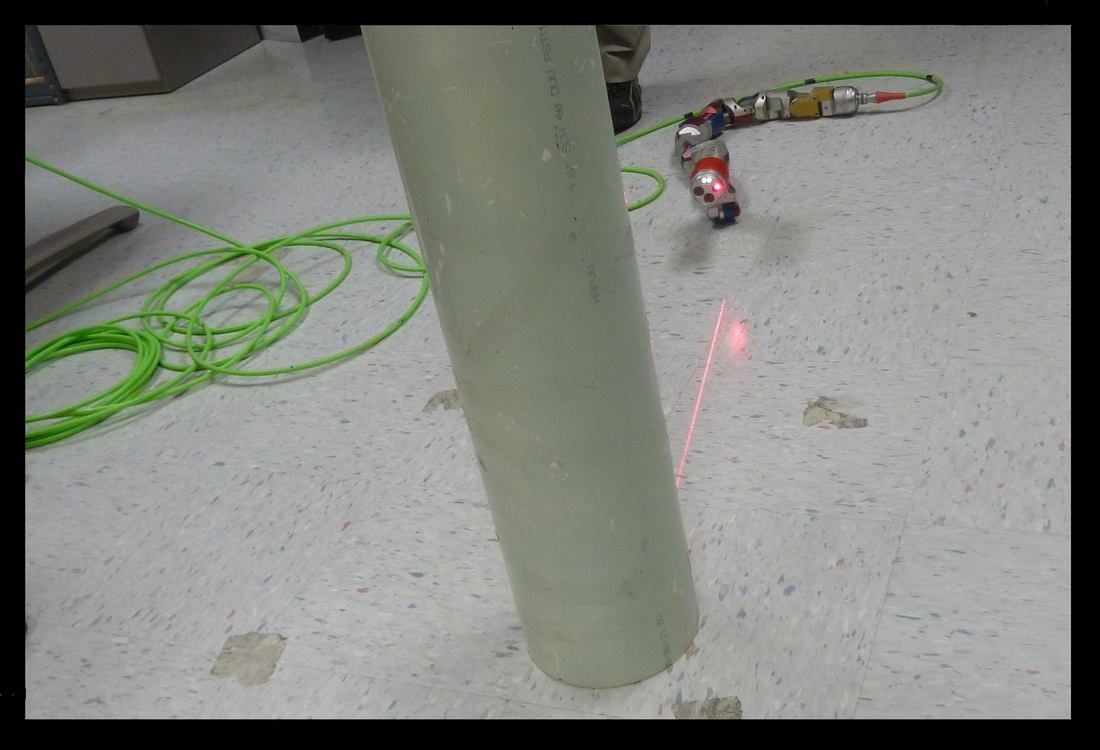

I reconfigured the pole detection and climbing behavior of snake robots at the Biorobotics Lab. My work involved the evaluation of segmentation algorithms, point clouds, and structured light sensors.


The snake robot has a custom structured light sensor on its head, which rotates about the snake's axis, to generate a 3D point cloud of the environment around it.

Segmentation algorithms are run on the point cloud to find the position of any poles near the snake. Once a pole is found, the snake first adopts a conical-sidewinding gait to approach the pole, and subsequently rolls around it. For climbing the pole itself, a compliant rolling gait is used.
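As a rough illustration of how a pole might be located in a point cloud, the sketch below fits a circle to a horizontal slice of points with an algebraic least-squares fit. It is not the segmentation algorithm used on the snake robots. It assumes the pole is roughly vertical in the cloud frame, so that a slice through it forms an arc, and the thresholds `max_radius` and `max_residual` are made-up values.

```python
import numpy as np


def fit_circle(points_xy):
    """Algebraic least-squares circle fit.

    Solves x^2 + y^2 = 2*cx*x + 2*cy*y + c for (cx, cy, c),
    where c = r^2 - cx^2 - cy^2.
    """
    pts = np.asarray(points_xy, dtype=float)
    A = np.column_stack((2 * pts[:, 0], 2 * pts[:, 1], np.ones(len(pts))))
    b = (pts ** 2).sum(axis=1)
    (cx, cy, c), *_ = np.linalg.lstsq(A, b, rcond=None)
    radius = np.sqrt(max(c + cx ** 2 + cy ** 2, 0.0))
    return cx, cy, radius


def looks_like_pole(points_xy, max_radius=0.15, max_residual=0.01):
    """Accept a slice as a pole if the fit is tight and the radius is small.

    Both thresholds (in metres) are illustrative assumptions.
    """
    pts = np.asarray(points_xy, dtype=float)
    cx, cy, r = fit_circle(pts)
    dist = np.hypot(pts[:, 0] - cx, pts[:, 1] - cy)
    residual = np.abs(dist - r).mean()  # mean distance from the fitted circle
    return bool(r <= max_radius and residual <= max_residual), (cx, cy, r)


# Example: the sensor sees only the near half of a 5 cm radius pole at (1.0, 0.5).
angles = np.linspace(np.pi / 2, 3 * np.pi / 2, 30)
arc = np.column_stack((1.0 + 0.05 * np.cos(angles), 0.5 + 0.05 * np.sin(angles)))
is_pole, (pole_x, pole_y, pole_r) = looks_like_pole(arc)
```

The fitted centre gives a target position for the approach gait, and the fitted radius gives an estimate of the pole size.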


In order to improve the performance of the behavior, I first set up a measure of resolution and accuracy of scanning and motion towards the pole.

Custom parameters were tuned in the Snake control GUI until the snake successfully detected and climbed a variety of poles. The exercise was conducted over a set of textures, illumination conditions, ranges, and pole sizes.

Resolution and accuracy for pole detection were defined as follows.