Hierarchical Reinforcement Learning for Sequencing Behaviors

A significant thrust in recent robotics literature addresses how to perform tasks by sequencing skills that abstract out lower level details of robot control. This paradigm of hierarchical learning has existed in the context of reinforcement learning in the options framework, but is gaining momentum again with the advent of deep reinforcement learning, and parameterizing policies as deep neural networks.

During the Deep Learning course at CMU, I explored the idea of Hierarchical Reinforcement Learning in robot navigation.

Overview

Given a set of primitive behaviors that mutually exclusively address parts of a large task, the question we seek to address is - "Can we learn to sequence primitive behaviors to achieve a larger task, lacking explicit supervision?"

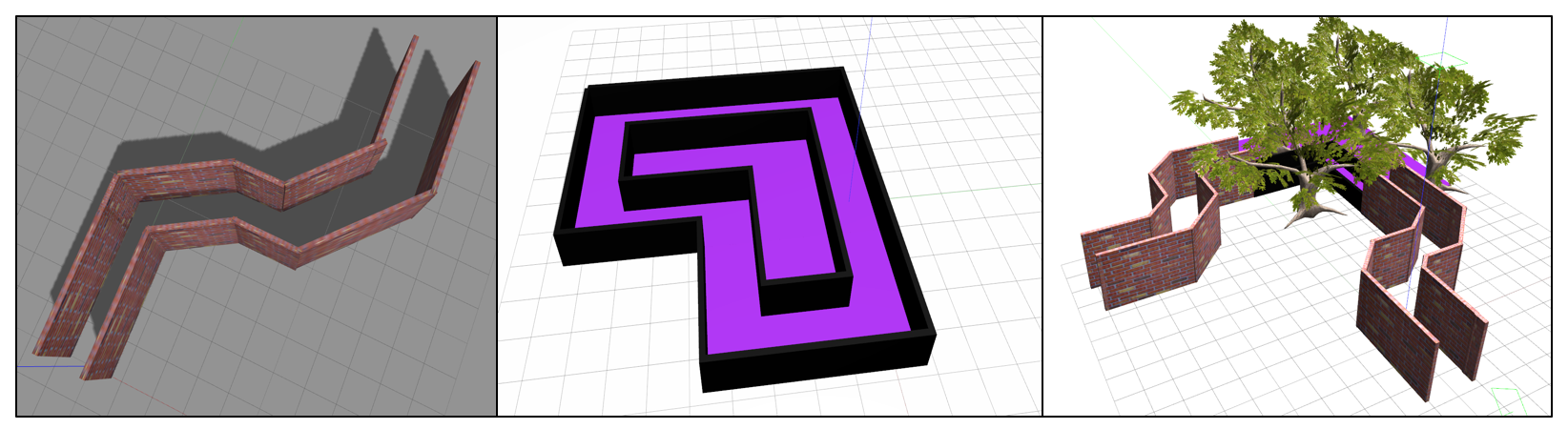

Central to our project is this idea of hierarchical reinforcement learning (HRL), where an agent learns a hierarchy of control policies. In our approach we consider two such levels: the low-level control policies select a robot action (such as move forward, left, or right) when provided with a perceptual input (i.e. raw camera pixels). We train several low-level policies to navigate a robot in different elementary environments.

The higher level meta-policy then selects which of these lower level policies to apply over an extended temporal period. This meta-level policy is trained over the compound environment. Ideally, the meta-policy learns to select the low-level control policy over the elementary environment over which it was trained. Thus the meta-level policy learns a sequence of low-level policies to execute to maximize the cummulative reward. Diagrammatically, we present a schematic of our hierarchical reinforcement learning in Figure 4. The meta level policy (depicted in blue) simply selects which of the control policies (in green) to apply.

During the Deep Learning course at CMU, I explored the idea of Hierarchical Reinforcement Learning in robot navigation.

Overview

Given a set of primitive behaviors that mutually exclusively address parts of a large task, the question we seek to address is - "Can we learn to sequence primitive behaviors to achieve a larger task, lacking explicit supervision?"

Central to our project is this idea of hierarchical reinforcement learning (HRL), where an agent learns a hierarchy of control policies. In our approach we consider two such levels: the low-level control policies select a robot action (such as move forward, left, or right) when provided with a perceptual input (i.e. raw camera pixels). We train several low-level policies to navigate a robot in different elementary environments.

The higher level meta-policy then selects which of these lower level policies to apply over an extended temporal period. This meta-level policy is trained over the compound environment. Ideally, the meta-policy learns to select the low-level control policy over the elementary environment over which it was trained. Thus the meta-level policy learns a sequence of low-level policies to execute to maximize the cummulative reward. Diagrammatically, we present a schematic of our hierarchical reinforcement learning in Figure 4. The meta level policy (depicted in blue) simply selects which of the control policies (in green) to apply.