Intelligent Vision System for Blind Enablement.

Several million people worldwide live with partial or total visual impairment. InViSyBlE is a wearable assistive device for visually impaired individuals. It incorporates multiple behaviors that assist users with a variety of tasks and help them lead independent lives.

We published a paper on InViSyBlE:

- Tanmay Shankar, Abhijat Biswas, and Venkat Arun, "Development of an Assistive Vision System," 9th International Convention on Rehabilitation Engineering and Assistive Engineering (iCREATe 2015).

The behaviors that InViSyBlE is currently capable of are described below.

Object Detection and Pose Estimation:

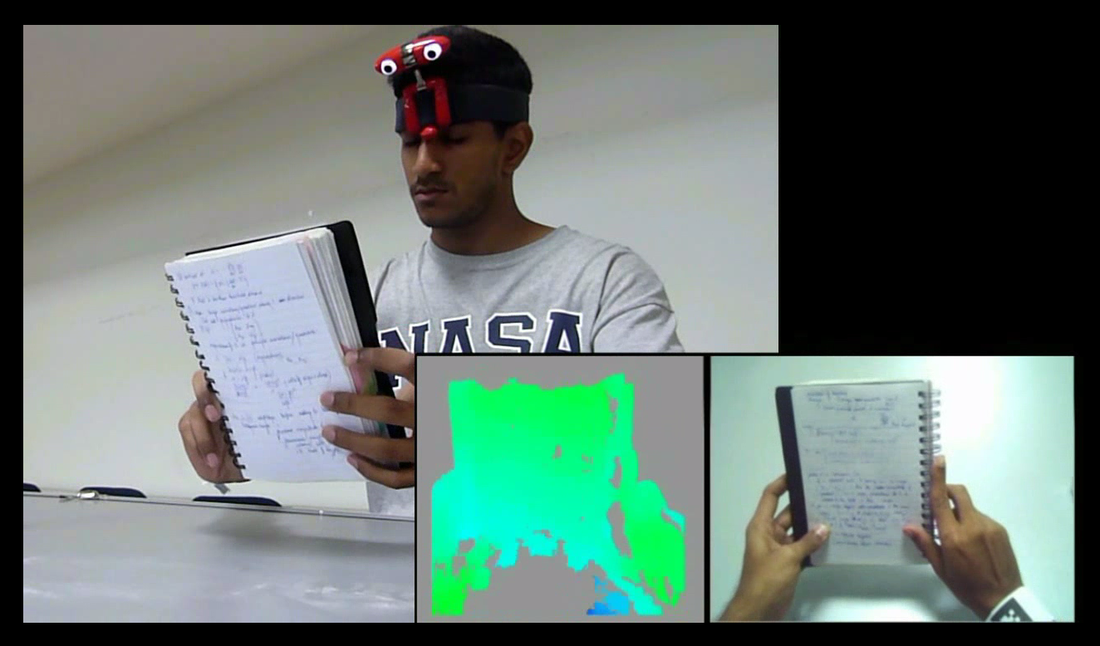

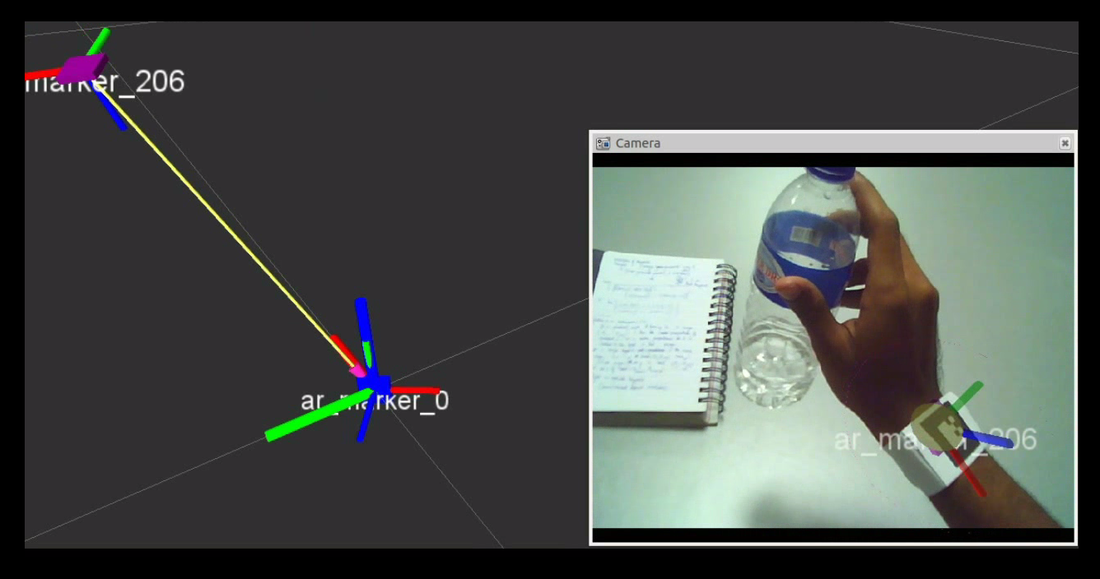

Given a typical form of the object that the user is trying to pick up, image segmentation may be performed on the disparity map obtained from the stereo feed. This returns the position and orientation of the object with respect to a pre-defined reference frame. If the user wears the AR Tag hand bracelet, the object pose may instead be returned relative to the user's hand. Although this behavior currently targets audio output, a haptic feedback mechanism would be more intuitive for it.

Obstacle Detection:

Background subtraction combined with the stereo depth data may be used to detect obstacles on a flat path, alerting the user to a discontinuity in the terrain.
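As a rough illustration of how stereo data can reveal a terrain discontinuity, the sketch below compares a measured disparity against the disparity a flat floor would produce. It is a ground-plane disparity check, not the background subtraction step itself, and it assumes a camera whose optical axis is horizontal at a known height above the floor. The function names, the 2-pixel tolerance, and the camera parameters are illustrative assumptions, not values taken from InViSyBlE.

```python
from typing import Optional


def expected_ground_disparity(row: float, cy: float, baseline_m: float,
                              camera_height_m: float) -> Optional[float]:
    """Disparity (pixels) that a flat floor would produce at image row `row`.

    For a horizontal camera at height h, a floor point at depth Z projects to
    row = cy + f*h/Z and has disparity d = f*B/Z, hence d = B*(row - cy)/h.
    Rows at or above the horizon (row <= cy) never see the floor.
    """
    if row <= cy:
        return None
    return baseline_m * (row - cy) / camera_height_m


def classify_pixel(measured_disparity: float, row: float, cy: float,
                   baseline_m: float, camera_height_m: float,
                   tol_px: float = 2.0) -> str:
    """Label one pixel as 'ground', 'obstacle', 'drop', or 'unknown'."""
    expected = expected_ground_disparity(row, cy, baseline_m, camera_height_m)
    if expected is None or measured_disparity <= 0:
        return "unknown"      # above the horizon, or no valid stereo match
    if measured_disparity > expected + tol_px:
        return "obstacle"     # closer than the floor should be
    if measured_disparity < expected - tol_px:
        return "drop"         # farther than the floor: a step down or a hole
    return "ground"
```

With an assumed 0.1 m baseline and a camera 1.5 m above the floor, row 390 of an image with `cy = 240` should show a floor disparity of about 10 px, so a measured 20 px at that row would be labeled an obstacle.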

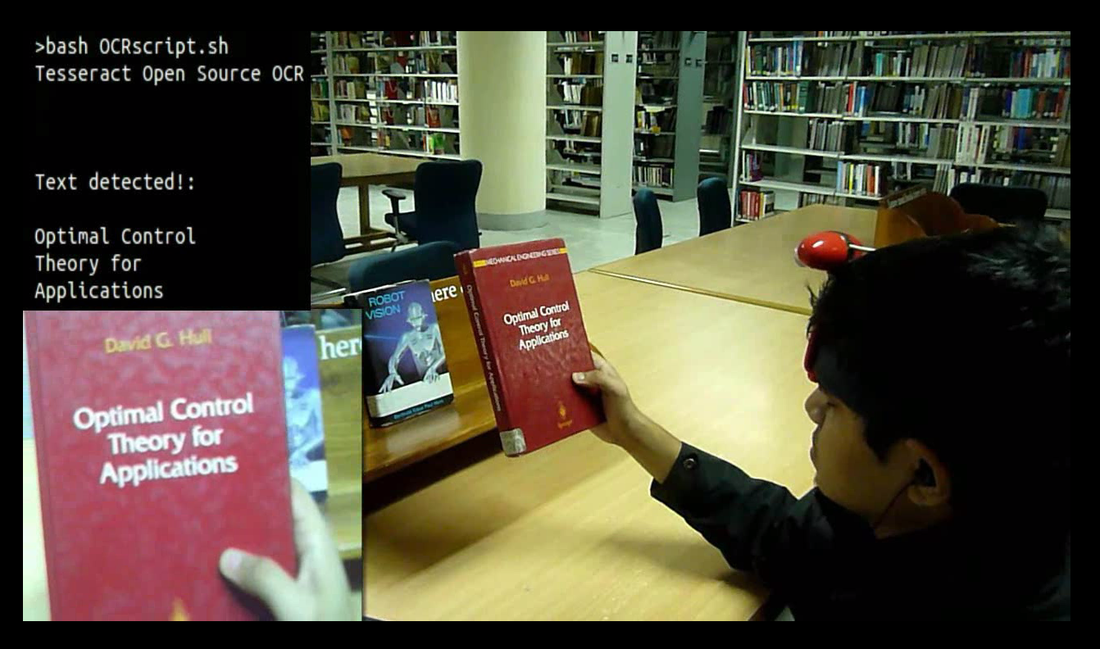

Text Detection and Recognition:

When enabled, this behavior automatically stores text in the camera's field of view that lies within a certain range, and then converts it to speech output that the user can listen to. We adapted Tesseract, Google's OCR engine, for this behavior.
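The range restriction can be pictured with a small sketch. It assumes that an OCR step has already produced text strings with confidence scores, and that a distance for each text region is available (for example from the stereo depth data). The `TextRegion` type, the 3 m range, and the confidence threshold are hypothetical choices for illustration, and the OCR and speech synthesis calls themselves are not shown.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TextRegion:
    text: str          # string returned by the OCR engine for this region
    distance_m: float  # distance to the region, e.g. from stereo depth
    confidence: float  # OCR confidence on a 0-100 scale (assumed)


def build_utterance(regions: List[TextRegion], max_range_m: float = 3.0,
                    min_confidence: float = 60.0) -> str:
    """Keep confident, in-range text and order it nearest first."""
    kept = [r for r in regions
            if r.distance_m <= max_range_m
            and r.confidence >= min_confidence
            and r.text.strip()]
    kept.sort(key=lambda r: r.distance_m)
    # The returned string is what would be handed to the speech output.
    return ". ".join(r.text.strip() for r in kept)
```

Reading the nearest text first is one possible design choice; ordering by position in the image would be equally reasonable.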

Face Detection and Recognition:

When the behavior is enabled, face detection is run on the input camera feed. Once a face is detected, a region of interest is identified and passed to a face recognition algorithm. The database of faces could potentially be built by interfacing the device with the user's social networking profiles, so as to retrieve the user's friends and acquaintances. The audio output would provide the identity and location of the person.
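The last two steps, matching a detected face against the database and describing where the person is, might look like the sketch below. The recognition algorithm is not specified here, so the sketch assumes each face has already been reduced to a numeric feature vector and uses a plain nearest-neighbor match. The function names, the distance threshold, and the three-way left/ahead/right split are all hypothetical.

```python
import math
from typing import Dict, Optional, Sequence, Tuple


def identify(embedding: Sequence[float],
             known: Dict[str, Sequence[float]],
             max_distance: float = 0.6) -> Optional[str]:
    """Return the name with the nearest stored vector, or None if none is close."""
    best_name, best_dist = None, float("inf")
    for name, ref in known.items():
        dist = math.dist(embedding, ref)  # Euclidean distance (Python 3.8+)
        if dist < best_dist:
            best_name, best_dist = name, dist
    return best_name if best_dist <= max_distance else None


def describe_location(box: Tuple[int, int, int, int], frame_width: int) -> str:
    """Map a face bounding box (x, y, w, h) to a coarse spoken direction."""
    x, _, w, _ = box
    centre = (x + w / 2) / frame_width  # 0.0 = left edge, 1.0 = right edge
    if centre < 1 / 3:
        return "to your left"
    if centre > 2 / 3:
        return "to your right"
    return "ahead of you"


def announce(embedding: Sequence[float], box: Tuple[int, int, int, int],
             frame_width: int, known: Dict[str, Sequence[float]]) -> str:
    """Build the sentence that would be sent to the audio output."""
    name = identify(embedding, known) or "Unknown person"
    return f"{name}, {describe_location(box, frame_width)}"
```

Rejecting matches beyond `max_distance` keeps the device from announcing a wrong name when a stranger is in view.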

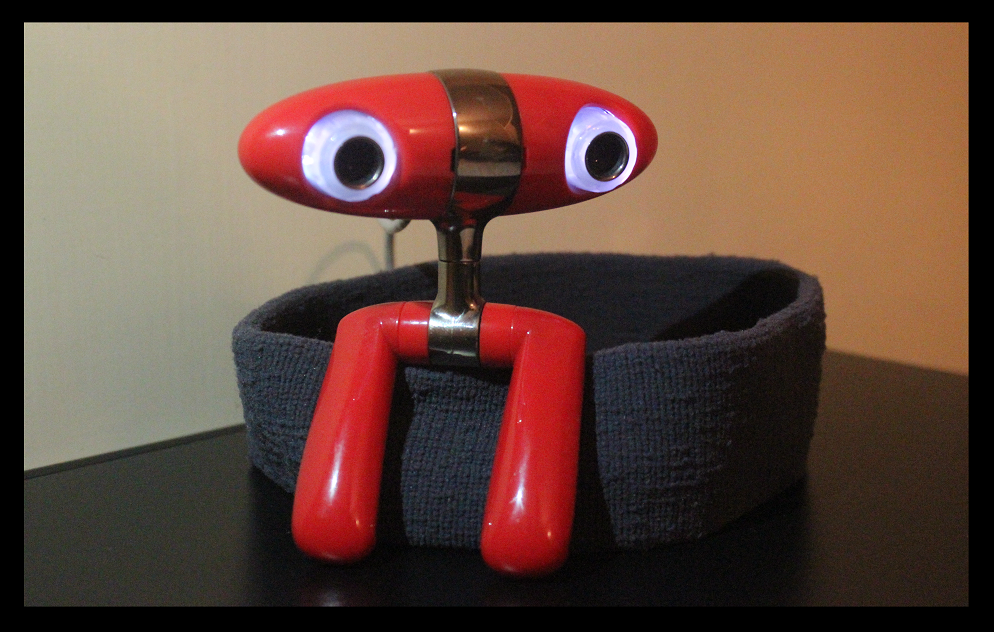

Hardware design:

The device consists of a lightweight head-mounted stereo camera setup, interfaced with a mobile phone (an Android platform) or a similar portable computing device. The phone also provides audio output through its standard earphone jack. In addition, a secondary haptic interface is proposed. The user would also wear a paper bracelet with AR Tags or markers, for use in some of the behaviors.

Software design:

A series of behaviors is to be implemented. The user would be able to toggle which behavior is active through a haptic interface. To ensure portability and reusability of the device, all processing is to be done on an Android phone, using open source libraries (OpenCV). The first iteration of the device would be capable of assisting the user through the behaviors described above.

If found feasible, additional behaviors would be implemented, including:

- Motion detection and tracking

- Traffic light detection
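The behavior toggling described under Software design could be organized along the lines of the sketch below, where a single manager holds the available behaviors and only the active one processes each camera frame. The `BehaviorManager` class and its method names are hypothetical, and the haptic input is represented simply by a call to `toggle()`.

```python
from typing import Callable, Dict, List


class BehaviorManager:
    """Holds named behaviors and runs only the currently active one."""

    def __init__(self, behaviors: Dict[str, Callable[[object], str]]):
        if not behaviors:
            raise ValueError("at least one behavior is required")
        self._behaviors = behaviors
        self._names: List[str] = list(behaviors)  # keeps insertion order
        self._index = 0

    @property
    def active(self) -> str:
        return self._names[self._index]

    def toggle(self) -> str:
        """Advance to the next behavior, wrapping around; return its name."""
        self._index = (self._index + 1) % len(self._names)
        return self.active

    def process(self, frame: object) -> str:
        """Pass the frame to the active behavior and return its spoken output."""
        return self._behaviors[self.active](frame)
```

Under this design, adding motion tracking or traffic light detection later would only mean registering one more entry in the dictionary.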